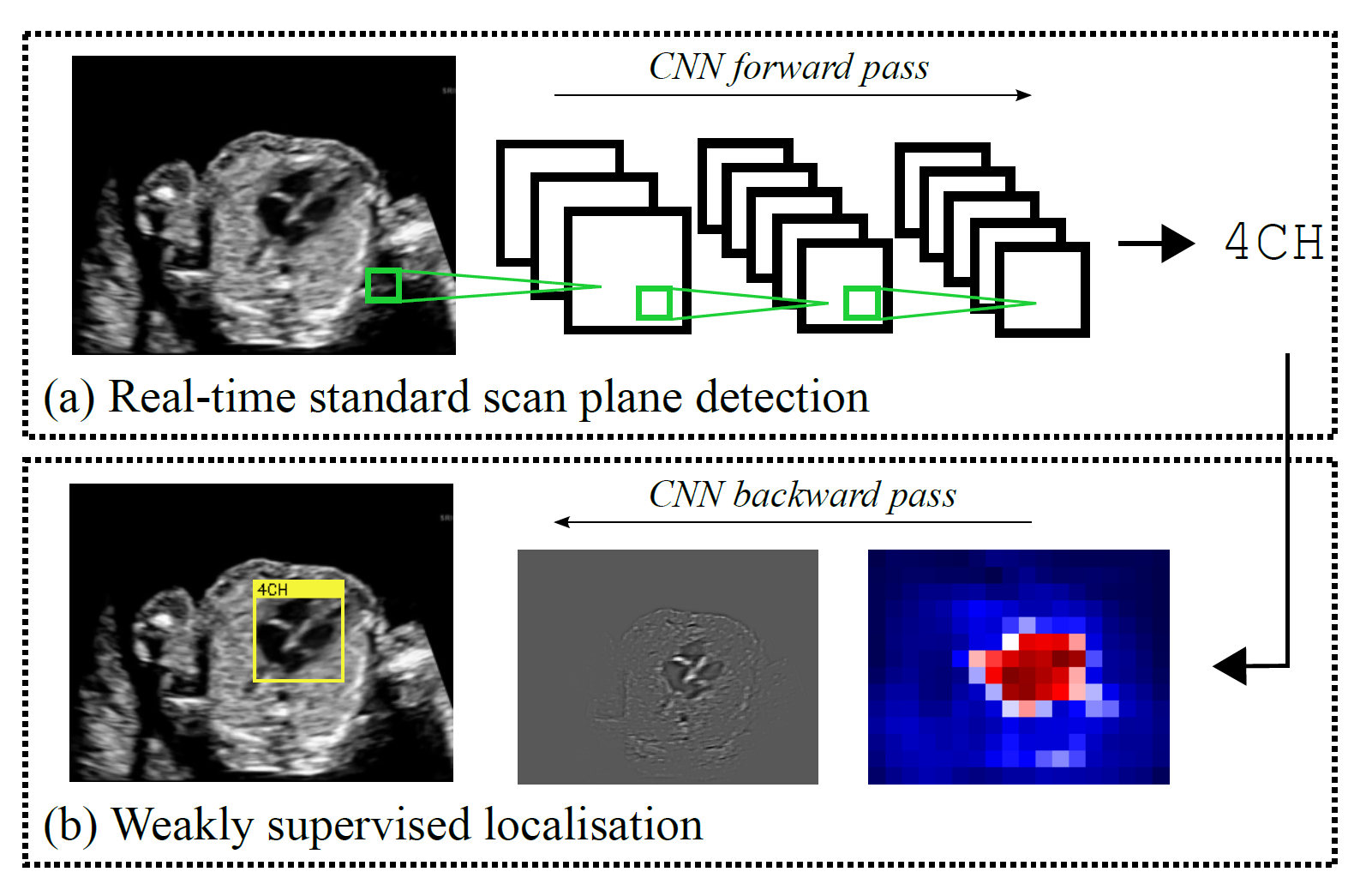

Identifying and interpreting fetal standard scan planes during 2D ultrasound mid-pregnancy examinations are highly complex tasks which require years of training. Apart from guiding the probe to the correct location, it can be equally difficult for a non-expert to identify relevant structures within the image. Automatic image processing can provide tools to help experienced as well as inexperienced operators with these tasks. In this paper, we propose a novel method based on convolutional neural networks which can automatically detect 13 fetal standard views in freehand 2D ultrasound data as well as provide a localisation of the fetal structures via a bounding box. An important contribution is that the network learns to localise the target anatomy using weak supervision only. The network architecture is designed to operate in real-time while providing optimal output for the localisation task. We present results for real-time annotation, retrospective frame retrieval from saved videos, and localisation on a very large and challenging dataset consisting of images and video recordings of full clinical anomaly screenings. The proposed method annotated video frames with an average F1-score of 0.86, and obtained a 90.09% accuracy for retrospective frame retrieval. Moreover, we achieved an accuracy of 77.8% on the localisation task.
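As a reading aid for the reported average F1-score, the following minimal sketch shows one way such a figure could be computed from per-frame labels. It assumes an unweighted (macro) mean over classes, which may differ from the averaging actually used in the paper. The function names and the example labels are hypothetical and are not part of the SonoNet code.

```python
from collections import Counter


def per_class_f1(y_true, y_pred):
    """Return a dict mapping each class label to its F1-score."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class gains a false positive
            fn[t] += 1  # true class gains a false negative
    scores = {}
    for c in sorted(set(y_true) | set(y_pred)):
        # F1 = 2*TP / (2*TP + FP + FN); defined as 0 when the class never occurs
        denom = 2 * tp[c] + fp[c] + fn[c]
        scores[c] = 2 * tp[c] / denom if denom else 0.0
    return scores


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 (assumption: macro averaging)."""
    scores = per_class_f1(y_true, y_pred)
    return sum(scores.values()) / len(scores)


# Hypothetical per-frame labels, for illustration only.
y_true = ["brain", "brain", "femur", "background"]
y_pred = ["brain", "femur", "femur", "background"]
average_f1 = macro_f1(y_true, y_pred)
```

A class-frequency-weighted mean would give more influence to common classes such as background frames, so the choice of averaging should be checked against the paper before comparing numbers.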

The SonoNet framework and models are available for download on GitHub.

Videos about this approach: